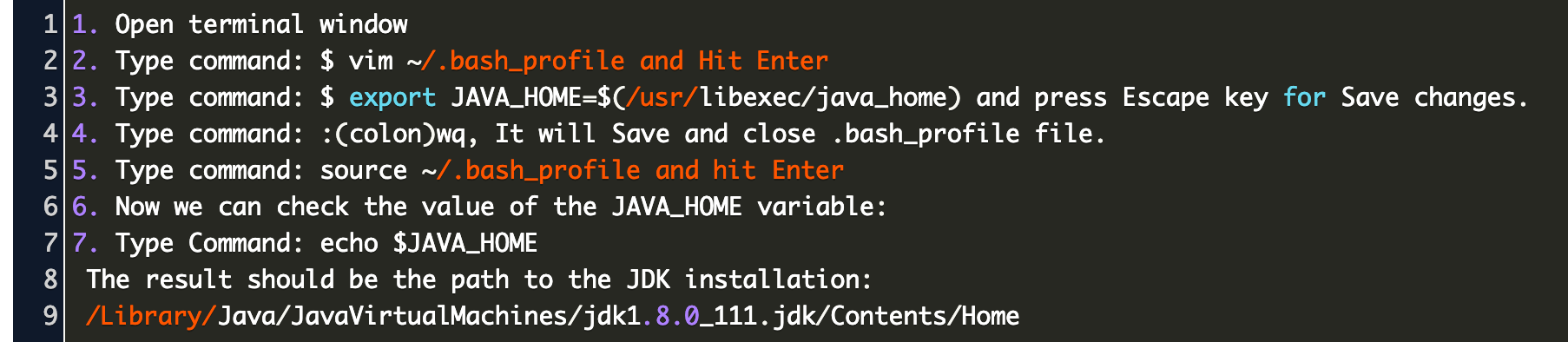

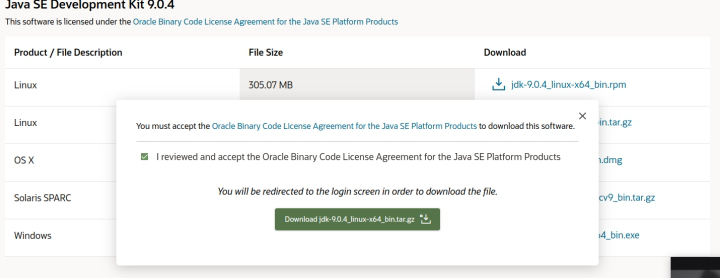

- R VERSION POINTING TO ANACONDA JAVA RATHER THAN JDK ON MAC HOW TO

- R VERSION POINTING TO ANACONDA JAVA RATHER THAN JDK ON MAC MAC OS

PySpark: these notes cover installing PySpark for Python 3 and running it from a Jupyter notebook. If you have a `pyspark` profile, run `jupyter notebook --profile=pyspark`. Alternatively, point the PySpark driver at Jupyter before launching: `export PYSPARK_DRIVER_PYTHON='jupyter'` and `export PYSPARK_DRIVER_PYTHON_OPTS='notebook -no …`. To make Spark importable from a notebook, install findspark with `conda install -c conda-forge findspark`, then open Jupyter Notebook in the usual way; nothing special is needed. Other routes are also possible: Python and SQL scripts in the SQL Notebooks of Azure Data Studio (SQL Notebooks are modeled on Jupyter notebooks); a Docker setup, where you create a `docker-compose.yml` and run `docker-compose up`; executing the PySpark code on HDInsight; and Visual Studio Code, where opening the Jupyter-Notebook folder makes it your workspace.
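The two `export` lines above can also be set from Python before starting `pyspark`. This is a minimal sketch, not a definitive setup: the helper name `jupyter_driver_env` and its `extra_opts` parameter are hypothetical, and only the two variable names come from the text above.

```python
import os


def jupyter_driver_env(extra_opts=""):
    """Return a copy of the current environment with the PySpark driver
    pointed at Jupyter. `extra_opts` (hypothetical parameter) is appended
    after the `notebook` subcommand."""
    env = dict(os.environ)  # copy, so the parent process is left untouched
    env["PYSPARK_DRIVER_PYTHON"] = "jupyter"
    env["PYSPARK_DRIVER_PYTHON_OPTS"] = ("notebook " + extra_opts).strip()
    return env


# Assumed usage: pass the result to a child process that starts pyspark, e.g.
#   import subprocess
#   subprocess.run(["pyspark"], env=jupyter_driver_env())
```

Building a copy of the environment keeps the change local to the launched process, so a plain `python` or `pyspark` shell started elsewhere is unaffected.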

You can configure Anaconda to work with Spark jobs in three ways: with the `spark-submit` command, with Jupyter Notebooks and Cloudera CDH, or with Jupyter Notebooks and Hortonworks HDP. In this tutorial we'll install PySpark and run it locally in both the shell and Jupyter Notebook. First, visit the Spark downloads page, select the latest Spark release and a prebuilt package for Hadoop, and download it directly. You will also need Python (I recommend Python 3.5 or later from Anaconda).
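Before running anything, it can help to see which Java, Spark, and PySpark the current environment actually resolves, since an Anaconda environment may put its own `java` ahead of a system JDK on `PATH`. The following is a rough diagnostic sketch using only the standard library; the function name `check_local_spark_setup` is hypothetical, and `JAVA_HOME` and `SPARK_HOME` are the conventional variable names, which your setup may or may not define.

```python
import importlib.util
import os
import shutil


def check_local_spark_setup():
    """Collect basic facts about the local environment without starting Spark."""
    return {
        # First `java` executable found on PATH, or None if there is none
        "java": shutil.which("java"),
        # Conventional variables; None when unset
        "JAVA_HOME": os.environ.get("JAVA_HOME"),
        "SPARK_HOME": os.environ.get("SPARK_HOME"),
        # True when the pyspark package is importable from this interpreter
        "pyspark_installed": importlib.util.find_spec("pyspark") is not None,
    }


if __name__ == "__main__":
    for key, value in check_local_spark_setup().items():
        print(f"{key}: {value}")
```

If the reported `java` path lies inside the Anaconda directory rather than a JDK install, that is a hint that `PATH` ordering, not Spark itself, decides which Java gets used.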

I am using Mac OS and Anaconda as the Python distribution.

This post shows how to take advantage of PySpark in a notebook as you learn Spark. Notebooks are also widely used in data preparation, data visualization, machine learning, and other big data scenarios, and tools such as the ArcGIS API for Python can be used from any application that can execute Python code. If you want to run pandas as well, see How to Run Pandas with Anaconda & Jupyter Notebook. To set up a PySpark SQL session, first create a Jupyter notebook in VS Code, then run some Spark code in it. The promise of a big data framework like Spark is realized only when it runs on a cluster with a large number of nodes, so beyond a local install you can also run a Jupyter notebook with a Spark master, 2 workers and a …, or launch Jupyter notebooks with PySpark on an EMR cluster. You can customize the `ipython` or `jupyter` commands by setting `PYSPARK_DRIVER_PYTHON_OPTS`.
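The original snippet did not survive on this page, so here is a stand-in smoke test, assuming PySpark is installed and a Java runtime is available. It estimates pi by random sampling on a local Spark session; the function names and the `pi-sketch` application name are made up for illustration, and this is not necessarily the code the post had in mind.

```python
import random


def inside_unit_circle(_):
    """Draw a random point in the unit square; True if it falls in the circle."""
    x, y = random.random(), random.random()
    return x * x + y * y < 1.0


def estimate_pi(num_samples=100_000):
    """Estimate pi on a local Spark session (requires pyspark and Java)."""
    # Imported here so the file still loads on machines without pyspark
    from pyspark.sql import SparkSession

    spark = (
        SparkSession.builder
        .master("local[*]")      # run locally on all available cores
        .appName("pi-sketch")    # hypothetical application name
        .getOrCreate()
    )
    try:
        hits = (
            spark.sparkContext
            .parallelize(range(num_samples))
            .filter(inside_unit_circle)
            .count()
        )
    finally:
        spark.stop()
    return 4.0 * hits / num_samples


# In a notebook cell, call estimate_pi() and check that it returns a float near 3.14.
```

If the call fails before any Spark output appears, the usual suspects are a missing or unexpected Java installation or a `pyspark` package that is not visible to the notebook's kernel.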